Fedora Linux 39 - Upgrade

Sunday, November 12. 2023

Twenty years with Fedora Linux. Release 39 is out!

I've run Red Hat since the 90s. Version 3.0.3 was released in 1996 and ever since I've had something from them running. As they scrapped Red Hat Linux in 2003 and went for Fedora Core / RHEL, I've had something from those two running. For the record: I hate that semi-working everything-done-only-half-way Debian/Ubuntu crap. When I want to toy around with something that doesn't quite fit, I choose Arch Linux.

Getting your Fedora upgraded in place is a rather simple process. Docs are in the article Performing system upgrade.

First, make sure the existing system is fully up to date with dnf --refresh upgrade. Then make sure the upgrade tooling is installed: dnf install dnf-plugin-system-upgrade. Now you're good to go for downloading all the new packages: dnf system-upgrade download --releasever=39

Note: This workflow has existed for a long time and is likely to keep working in the future. Next time, all you have to do is replace the release version with the one you want to upgrade to.
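Since only the release number changes between upgrades, the preparation steps can be sketched as a small Python helper. This is a minimal sketch, not an official tool: the function name prep_commands is made up for illustration, and it only builds and prints the commands quoted in the text, it does not run them.

```python
import shlex


def prep_commands(releasever):
    """Build the preparation commands from the text for a target Fedora release.

    prep_commands is a hypothetical helper name; the dnf invocations are the
    ones quoted in the post, with only the release number substituted.
    """
    if int(releasever) < 1:
        raise ValueError("releasever must be a positive release number")
    return [
        ["dnf", "--refresh", "upgrade"],
        ["dnf", "install", "dnf-plugin-system-upgrade"],
        ["dnf", "system-upgrade", "download", f"--releasever={int(releasever)}"],
    ]


# Print the commands for review instead of executing them.
for command in prep_commands(39):
    print(shlex.join(command))
```

Printing rather than executing keeps the sketch harmless; running the commands for real still needs root.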

Now all prep is done and you're good to go for the actual upgrade. To state the obvious: this is the dangerous part. Everything before this has been a warm-up run.

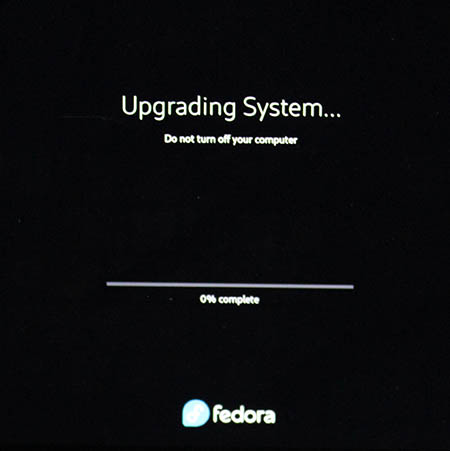

dnf system-upgrade reboot

If you have a console, you'll see the progress.

Installing the packages and rebooting into the new release shouldn't take too many minutes.

When back in, verify: cat /etc/fedora-release ; cat /proc/version

This resulted in my case:

Fedora release 39 (Thirty Nine)

Linux version 6.5.11-300.fc39.x86_64 (mockbuild@d23353abed4340e492bce6e111e27898) (gcc (GCC) 13.2.1 20231011 (Red Hat 13.2.1-4), GNU ld version 2.40-13.fc39) #1 SMP PREEMPT_DYNAMIC Wed Nov 8 22:37:57 UTC 2023
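If you upgrade several machines, the same check can be scripted. The following is a sketch only, assuming both files keep the shape shown in my output above; the function names are invented for this example.

```python
import re
from typing import Optional


def fedora_release(release_text: str) -> int:
    """Extract the release number from /etc/fedora-release content."""
    match = re.search(r"Fedora release (\d+)", release_text)
    if match is None:
        raise ValueError("not a Fedora release string")
    return int(match.group(1))


def kernel_fedora_tag(proc_version: str) -> Optional[int]:
    """Extract NN from the '.fcNN.' tag of the running kernel, if present."""
    match = re.search(r"Linux version \S+\.fc(\d+)\.", proc_version)
    return int(match.group(1)) if match else None


def upgrade_looks_complete(release_text: str, proc_version: str, target: int) -> bool:
    """True when both the release file and the running kernel match the target."""
    return (fedora_release(release_text) == target
            and kernel_fedora_tag(proc_version) == target)
```

Note that a kernel built for the previous release can legitimately still be running, so a mismatch here is a hint to look closer, not proof of a failed upgrade.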

Finally, you can do optional clean-up: dnf system-upgrade clean && dnf clean packages

That's it! You're done for at least the next 6 months.

Bare Bones Software - BBEdit - Indent with tab

Saturday, October 28. 2023

As a software developer I've used pretty much all the text editors there are. Bold statement. The fact remains that there aren't that many of them in common use.

On Linux, I definitely use Vim, rarely Emacs, almost never Nano (any editor requiring Ctrl-s over SSH is crap!).

On Windows, mostly Notepad++, rarely Windows' own Notepad. Both use Ctrl-s, but neither works over an SSH session.

On Mac, mostly BBEdit.

Then there is the long list of IDEs available on many platforms I've used or am still using to work with multiple programming languages and file formats. Short list with typical ones would be: IntelliJ and Visual Studio.

Note: VScode is utter crap! People who designed VScode were high on drugs or plain old-fashioned idiots. They have no clue what a developer needs. That vastly overrated waste-of-disc-space is a last-resort editor for me.

BBEdit 14 homepage states:

It doesn’t suck.®

Oh, but it does! It can be made less sucky, though.

Here is an example:

In the above example, I'm editing a JSON file. It happens to be in a rather unreadable state and sprinkling a bit of indentation on top of it should make the content easily readable.

Remember, earlier I mentioned a long list of editors. Virtually every single one of those has functionality to highlight a section of text and indent selection by pressing Tab. Not BBEdit! It simply replaces entire selection with a tab-character. Insanity!

Remember the statement on not sucking? There is a well-hidden option:

The secret option is called Allow Tab key to indent text blocks and it was introduced in version 13.1.2. Why this isn't default ... correction: Why this wasn't the default behaviour from the get-go is a mystery.

Now the indentation works as expected:

What puzzles me is the difficulty of finding the option for indenting with a Tab. I googled far and wide. To no avail. Those technical writers @ Barebones really should put some effort into making this option better known.

Nuvoton NCT6793D lm_sensors output

Monday, July 3. 2023

LM-Sensors is a set of libraries and tools for accessing your Linux server's motherboard sensors. See more @ https://github.com/lm-sensors/lm-sensors.

Ever wondered why on Windows it is tricky to get your CPU fan's rotation speed or core temperatures from your fancy GPU without the manufacturer's utilities? Obviously vendors do provide all the possible readings in their utilities, but for people who want to read, record and store the data for their own purposes, things get hairy. Nothing generic exists and, for some unknown reason, such an API isn't even planned.

On Linux, The One toolkit to use is LM-Sensors. On the kernel side, there is the Linux Hardware Monitoring kernel API. For this stack to work, you also need a kernel module specific to your motherboard providing the requested sensor information via this beautiful API. It's also worth noting that your PC's hardware will have multiple sensor data providers. An incomplete list would include: motherboard, CPU, GPU, SSD, PSU, etc.

Once sensors-detect has found all your sensors, confirm that sensors outputs what you'd expect it to. In my case there was a major malfunction. On boot, the following happened when the system started sensord (in case you didn't know, kernel messages can be read via dmesg):

systemd[1]: Starting lm_sensors.service - Hardware Monitoring Sensors...

kernel: nct6775: Enabling hardware monitor logical device mappings.

kernel: nct6775: Found NCT6793D or compatible chip at 0x2e:0x290

kernel: ACPI Warning: SystemIO range 0x0000000000000295-0x0000000000000296 conflicts with OpRegion 0x0000000000000290-0x0000000000000299 (_GPE.HWM) (20221020/utaddress-204)

kernel: ACPI: OSL: Resource conflict; ACPI support missing from driver?

systemd[1]: Finished lm_sensors.service - Hardware Monitoring Sensors.
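The ACPI warning above names two I/O port ranges. A quick way to convince yourself they really collide is to parse both and test for overlap. This is a sketch under the assumption that the warning always carries exactly two ranges in the 0xSTART-0xEND form seen in my log; the function names are made up for illustration.

```python
import re

# Matches one "0xSTART-0xEND" hexadecimal range as printed in the warning.
RANGE = re.compile(r"0x([0-9a-fA-F]+)-0x([0-9a-fA-F]+)")


def parse_conflict(line):
    """Return the (start, end) pairs of the driver range and the ACPI OpRegion."""
    ranges = [(int(lo, 16), int(hi, 16)) for lo, hi in RANGE.findall(line)]
    if len(ranges) != 2:
        raise ValueError("expected exactly two address ranges")
    return ranges[0], ranges[1]


def overlaps(first, second):
    """Two inclusive ranges overlap when each starts before the other ends."""
    return first[0] <= second[1] and second[0] <= first[1]


line = ("ACPI Warning: SystemIO range 0x0000000000000295-0x0000000000000296 "
        "conflicts with OpRegion 0x0000000000000290-0x0000000000000299 "
        "(_GPE.HWM) (20221020/utaddress-204)")
driver, opregion = parse_conflict(line)
print(hex(driver[0]), hex(driver[1]), "overlaps OpRegion:", overlaps(driver, opregion))
```

Here the two ports the nct6775 driver wants (0x295 and 0x296) sit inside the region ACPI has claimed (0x290 to 0x299), which is why the kernel refuses access by default.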

This conflict resulted in no available mobo readings! NVMe, GPU and CPU cores were ok, but what I was mostly looking for was fan RPMs and mobo temps, just to verify my system health. No such joy.

Uff.

It seems this particular Linux kernel module has issues. Or another way to state it: mobo manufacturers have trouble implementing the Nuvoton chip into their mobos. On Gentoo forums, there is a helpful thread: [solved] nct6775 monitoring driver conflicts with ACPI

Disclaimer: For ROG Maximus X Code -mobo adding acpi_enforce_resources=no into kernel parameters is the correct solution. Results will vary depending on what mobo you have.

Such an ACPI setting can be made permanent. First query which kernel is being used (I run Fedora): grubby --info=$(grubby --default-index). Then update that kernel's arguments: grubby --args="acpi_enforce_resources=no" --update-kernel DEFAULT. After a reboot the fix is in effect: the ACPI Warning is gone and mobo sensor data can be seen.

As a next step you'll need userland tooling to interpret the raw data into human-readable information with semantics. A few years back, I wrote about Improving Nuvoton NCT6776 lm_sensors output. It's mainly about bridging the flow of zeros and ones into something having meaning to humans. This is my LM-Sensors configuration for ROG Maximus X Code:

chip "nct6793-isa-0290"

# 1. voltages

ignore in0

ignore in1

ignore in2

ignore in3

ignore in4

ignore in5

ignore in6

ignore in7

ignore in8

ignore in9

ignore in10

ignore in11

label in12 "Cold Bug Killer"

set in12_min 0.936

set in12_max 2.613

set in12_beep 1

label in13 "DMI"

set in13_min 0.550

set in13_max 2.016

set in13_beep 1

ignore in14

# 2. fans

label fan1 "Chassis fan1"

label fan2 "CPU fan"

ignore fan3

ignore fan4

label fan5 "Ext fan?"

# 3. temperatures

label temp1 "MoBo"

label temp2 "CPU"

set temp2_max 90

set temp2_beep 1

ignore temp3

ignore temp5

ignore temp6

ignore temp9

ignore temp10

ignore temp13

ignore temp14

ignore temp15

ignore temp16

ignore temp17

ignore temp18

# 4. other

set beep_enable 1

ignore intrusion0

ignore intrusion1

I'd like to credit Mr. Peter Sulyok on his work about ASRock Z390 Taichi. This mobo happens to use the same Nuvoton NCT6793D -chip for LPC/eSPI SI/O (I have no idea what those acronyms are for, I just copy/pasted them from the chip data sheet). The configuration is in GitHub for everybody to see: https://github.com/petersulyok/asrock_z390_taichi

Also, I'd like to state my ignorance. After reading less than 500 pages of the NCT6793D data sheet, I have no idea what these are:

- Cold Bug Killer voltage

- DMI voltage

- AUXTIN1, or exactly what temperature measurement it serves

- PECI Agent 0 temperature

- PECI Agent 0 Calibration temperature

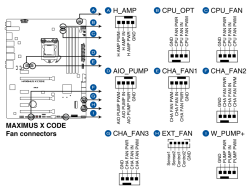

Remember, I did mention semantics. From the sensors command output I can read a reading, but what it translates into, no idea! Luckily some of the readings are easy to understand and interpret. As an example, fan RPMs are really easy to identify by unplugging the fan from its connector. Here is an excerpt from my mobo manual to explain the fan connectors:

Once data quality is taken care of and the output is meaningful, the next step is to start recording data. In LM-Sensors, there is sensord for that. It is a system service taking a snapshot (you can define the frequency) and storing it for later use. I'll enrich the stored data points with system load averages, which lets me estimate how high temperatures and/or fan RPMs relate to how hard my system is working.
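To illustrate the enrichment idea only (this is not how sensord stores its data), here is a minimal Python sketch that merges a set of sensor readings with the 1, 5 and 15 minute load averages into one data point. The function name, the dictionary keys and the example readings are all assumptions made for this example.

```python
import os
import time


def enrich_snapshot(readings, loadavg=None, now=None):
    """Combine sensor readings with load averages into a single data point.

    readings: mapping of label -> value, for example {"CPU fan": 1200.0}.
    loadavg and now can be injected for testing; by default they come from
    the running system.
    """
    if loadavg is None:
        loadavg = os.getloadavg()  # Unix only; raises OSError if unavailable
    load1, load5, load15 = loadavg
    point = {
        "timestamp": time.time() if now is None else now,
        "load1": load1,
        "load5": load5,
        "load15": load15,
    }
    point.update(readings)
    return point


# Hypothetical readings, using labels from the configuration above.
print(enrich_snapshot({"CPU fan": 1200.0, "CPU": 45.0}))
```

With load and temperature in the same record, plotting one against the other later becomes a simple lookup.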

Finally, all the data gathered into an RRDtool database can be easily visualized with rrdcgi as HTML plus a set of PNG images, presenting a web page like this:

Nice!

AMD Software: Adrenalin Edition - Error 202

Saturday, April 29. 2023

GPU drivers are updated often. The surprise comes when the update fails. What! Why! How is this possible?

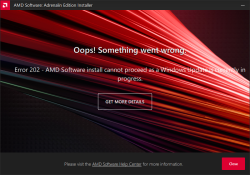

Error 202 – AMD Software Installer Cannot Proceed as a Windows Update Is Currently in Progress

To make sure this wasn't a fluke, I retried. A few times, actually. Nope. Didn't help.

I'm not alone with this. In the AMD community, there is a thread titled Error 202 but there's no pending Windows Update. On my Windows, too, there was nothing pending:

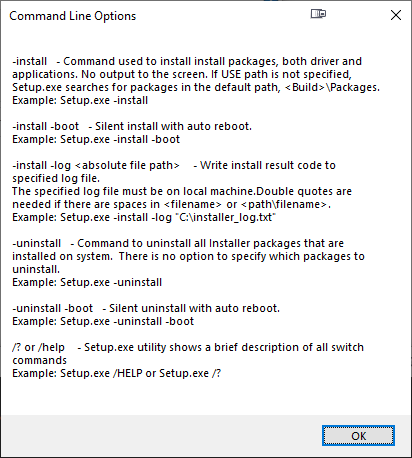

As this was the first time I had a hiccup, I realized I knew nothing about the installer. On Windows, it always pays off to run setup.exe with the switch /?. This is what will be displayed:

Haa! Options. Going for: .\Setup.exe -install -boot -log inst.log

After the expected failure, the log reveals:

InstallMan::isDisplayDriverEligibleToInstall :6090 Display driver Eligible to Install

isWindowsServiceStopped :4102 Queried Windows Update Status: 4

pauseWindowsUpdate :5244 drvInst.exe is currently running

InstallMan::performMyState :5177 ERROR --- InstallMan -> Caught an AMD Exception. Error code to display to user: 202. Debug hint: "drvInst.exe is currently running"
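When the log is long, pulling out just the error code and its debug hint saves some scrolling. The following is a sketch that assumes the error line always looks like the one in my excerpt; AMD does not document this log format as far as I know, and the function name is invented here.

```python
import re

# Pattern derived from the single error line shown in the log excerpt.
ERROR_LINE = re.compile(
    r'Error code to display to user: (\d+)\. Debug hint: "([^"]*)"'
)


def installer_errors(log_text):
    """Return (error_code, debug_hint) for every matching line in the log."""
    return [(int(code), hint) for code, hint in ERROR_LINE.findall(log_text)]


sample = '''isWindowsServiceStopped :4102 Queried Windows Update Status: 4
pauseWindowsUpdate :5244 drvInst.exe is currently running
InstallMan::performMyState :5177 ERROR --- InstallMan -> Caught an AMD Exception. Error code to display to user: 202. Debug hint: "drvInst.exe is currently running"'''

for code, hint in installer_errors(sample):
    print(code, hint)
```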

No idea where the claim that drvInst.exe is currently running comes from. It isn't! Obviously something is reading the Windows Update status wrong. Let's see what happens if I bring the Windows Update service down with a simple PowerShell command: Stop-Service -Name wuauserv

Ta-daa! It works! Now installation will complete.

File 'repomd.xml' from repository is unsigned

Thursday, March 23. 2023

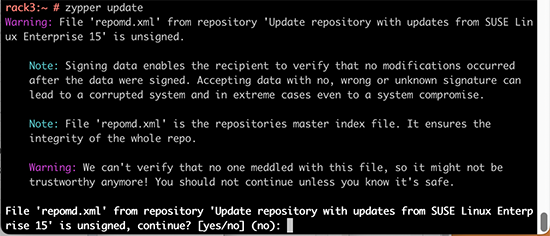

For all those years I've been running SUSE-Linux, I've never bumped into this one while running a trivial zypper update:

Warning: File 'repomd.xml' from repository 'Update repository with updates from SUSE Linux Enterprise 15' is unsigned.

Note: Signing data enables the recipient to verify that no modifications occurred

after the data were signed. Accepting data with no, wrong or unknown signature can

lead to a corrupted system and in extreme cases even to a system compromise.

Note: File 'repomd.xml' is the repositories master index file. It ensures the

integrity of the whole repo.

Warning: We can't verify that no one meddled with this file, so it might not be

trustworthy anymore! You should not continue unless you know it's safe.

continue? [yes/no] (no):

This error slash warning being weird and potentially dangerous, my obvious reaction was to hit ctrl-c and go investigate. First, my package verification mechanism should be intact and able to verify whether the downloaded updates are unaltered or not. Second, there should not have been any breaking changes to my system; at least I didn't make any. As my system didn't seem to be breached, I assumed a system malfunction and went investigating.

Quite soon, I learned this is a less than rare event. It has happened multiple times to other people. According to the article Signature verification failed for file ‘repomd.xml’ from repository ‘openSUSE-Leap-42.2-Update’ there exists a simple fix.

By running two commands, zypper clean --all and zypper ref, the problem should dissolve.

Yes, that is the case. After a simple wash/clean/rinse -cycle zypper update worked again.

It was just weird to bump into that for the first time now. I'd assume this would have occurred some time earlier.

Writing a secure Systemd daemon with Python

Sunday, March 5. 2023

This is a deep dive into systems programming using Python. For those unfamiliar with programming, systems programming sits on top of hardware / electronics design, firmware programming and operating system programming. However, it is not applications programming which targets mostly end users. Systems programming targets the running system. Mr. Yadav of Dark Bears has an article Systems Programming is Hard to Do – But Somebody’s Got to Do it, where he describes the limitations and requirements of doing so.

Typically systems programming is done with C, C++, Perl or Bash. As Python is gaining popularity, I definitely want to take a swing at systems programming with Python. In fact, there aren't many resources about the topic on the entire Internet.

Requirements

This is the list of basic requirements I have for a Python-based system daemon:

- Run as service: Must run as a Linux daemon, https://man7.org/linux/man-pages/man7/daemon.7.html
  - Start running on system boot and stop running on system shutdown
- Modern: systemd-compatible, https://systemd.io/
  - Not interested in ancient SysV init support anymore, https://danielmiessler.com/study/the-difference-between-system-v-and-systemd/
- Modern: D-bus -connected
  - Service provided will have an interface on system D-bus, https://www.freedesktop.org/wiki/Software/dbus/
  - All Linux systems are built on top of D-bus, I absolutely want to be compatible
- Monitoring: Must support systemd watchdog, https://0pointer.de/blog/projects/watchdog.html
  - Surprisingly many out-of-box Linux daemons won't support this. This is most likely because they're still SysV-init based and haven't modernized their operation.
  - I most definitely want to have this feature!
- Security: Must use Linux capabilities to run only with necessary permissions, https://man7.org/linux/man-pages/man7/capabilities.7.html
- Security: Must support SElinux to run only with required permissions, https://github.com/SELinuxProject/selinux-notebook/blob/main/src/selinux_overview.md
- Isolation: Must be independent from system Python
  - venv
  - virtualenv
  - Any possible changes to system Python won't affect daemon or its dependencies at all.
- Modern: Asynchronous Python
  - Event-based is the key to success.
  - D-bus and systemd watchdog pretty much nail this. Absolutely must be asynchronous.
- Packaging: Installation from RPM-package
  - This is the only one I'll support for any foreseeable future.
  - The package will contain all necessary parts, libraries and dependencies to run a self-contained daemon.

That's a tall order. Selecting only two or three of those is enough to add tons of complexity to my project. Also, I initially expected somebody else in The Net to be doing this same or something similar. Looks like I was wrong. Most systems programmers love sticking to their old habits and staying in the SysV-init era with their synchronous C / Perl -daemons.

Scope / Target daemon

I've previously blogged about running my own email server and fighting spam. Let's automate a lot of those tasks and, while automating, create a Maildir monitor for the junk mail -folder.

This is the project I wrote for that purpose: Spammer Blocker

The toolkit will query for the AS-number of the spam-sending SMTP-server. Typically I'll copy/paste the IP-address from SpamCop's report and produce a CIDR-table for Postfix. The table will add headers to email to-be-stored so that possible Procmail / Maildrop can act on it, if so needed. As the junk mail -folder is constantly monitored, any manually moved mail will be processed also.

Having these features brings your own Linux box's spam handling capabilities pretty close to any of those free-but-spy-all -services commonly used by everybody.

Addressing the list of requirements

Let's take a peek at what I did to meet the above requirements.

Systemd daemon

This is nearly trivial. See service definition in spammer-reporter.service.

What's in the file is your run-of-the-mill systemd service with appropriate unit, service and install -definitions. That triplet makes a Linux run a systemd service as a daemon.

Python venv isolation

For any Python-developer this is somewhat trivial. You create the environment box/jail, install requirements via setup.py and that's it. You're done. This same isolation mechanism will be used later for packaging and deploying the ready-made daemon into a system.

What's missing, or still to do, is to start using a pyproject.toml. That is something I've yet to learn. Obviously there is always something. Nobody, nowhere is "ready". Ever.

Asynchronous code

Talking to systemd watchdog and providing a service endpoint on system D-bus requires a little bit of effort. Read: lots of it.

To get a D-bus service properly running, I'll first become asynchronous. For that I'll initiate an event-loop dbus.mainloop.glib. There are multiple options for an event loop, but that is the only one actually working. The majority of Python code won't work with GLib; it needs asyncio. For that I'll use asyncio_glib to pair GLib's loop with asyncio. It took me a while to learn and understand how to actually achieve that. When successfully done, everything needed will run in a single asynchronous event loop. Great success!

With a solid foundation in place, the main task is an asynchronous task for monitoring filesystem changes, run in a forever-loop. See inotify(7) for the non-Python interface. See the asyncinotify-library for more details of the Pythonic version. What I'll be monitoring is users' Maildirs configured to receive junk/spam. When there is a change, a check is made to see if the change is about new spam received.

For side tasks, there is a D-bus service provider. If the daemon is running under systemd, the required watchdog -handler is also attached to the event loop as a periodic task. Out-of-box my service-definition will state max. 20 seconds between watchdog notifications (see service Type=notify and WatchdogSec=20s). In the daemon configuration file spammer-reporter.toml, I'll use 15 seconds as the interval. That 5 seconds should be plenty of headroom.

Documentation of systemd.service for WatchdogSec states the following:

If the time between two such calls is larger than the configured time, then the service is placed in a failed state and it will be terminated

For any failed service, there is the obvious Restart=on-failure.

If for ANY possible reason, the process is stuck, systemd scaffolding will take control of the failure and act instantly. That's resiliency and self-healing in my books!
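The watchdog side of this can be sketched with nothing but the standard library. This is a minimal illustration of the notification protocol documented in sd_notify(3) (a datagram sent to the Unix socket named in NOTIFY_SOCKET), not the code from my daemon; the names sd_notify and watchdog_task are chosen for this example, and the 15 second interval is the one mentioned above.

```python
import asyncio
import os
import socket


def sd_notify(message: str) -> bool:
    """Send one notification datagram to systemd.

    Returns False when NOTIFY_SOCKET is not set, i.e. when the process is
    not running under a systemd unit that expects notifications.
    """
    path = os.environ.get("NOTIFY_SOCKET")
    if not path:
        return False
    if path.startswith("@"):
        # A leading "@" denotes a Linux abstract namespace socket.
        path = "\0" + path[1:]
    with socket.socket(socket.AF_UNIX, socket.SOCK_DGRAM) as sock:
        sock.sendto(message.encode("utf-8"), path)
    return True


async def watchdog_task(interval: float = 15.0) -> None:
    """Tell systemd we are ready, then keep petting the watchdog forever."""
    sd_notify("READY=1")
    while True:
        sd_notify("WATCHDOG=1")
        await asyncio.sleep(interval)
```

Scheduling watchdog_task() as a task on the same event loop as the rest of the daemon is the point: if the loop ever stalls, the WATCHDOG=1 messages stop and systemd notices within WatchdogSec.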

Security: Capabilities

My obvious choice would be to not run as root. As my main task is to provide a D-bus service while reading all users' mailboxes, there is absolutely no way of avoiding root permissions. Trust me! I tried everything I could find to grant nobody's process enough permissions to do all that. To no avail. See the documentation about UID / GID rationale.

As standard root will have waaaaaay too much power (especially if mis-applied) I'll take some of it away. There is an as yet rarely used mechanism, capabilities(7), in Linux. My documentation of what CapabilityBoundingSet=CAP_AUDIT_WRITE CAP_DAC_READ_SEARCH CAP_IPC_LOCK CAP_SYS_NICE means in the system service definition is also in the source code. That set grants the process permission to monitor, read and write any users' files.

There are a couple of other super-user permissions left. Most of the not-needed powers a regular root would have are stripped, though. If there is a security leak and my daemon is used to do something funny, quite a lot of the potential impact is already mitigated as the process isn't allowed to do much.
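To check what the kernel actually enforces, a process can compare the CapBnd line of /proc/self/status against the expected bitmask. Below is a sketch: the bit numbers are the ones defined in linux/capability.h, while the helper names are made up for this example.

```python
# Bit positions from <linux/capability.h> for the four capabilities in the
# CapabilityBoundingSet= line quoted above.
CAPABILITY_BITS = {
    "CAP_DAC_READ_SEARCH": 2,
    "CAP_IPC_LOCK": 14,
    "CAP_SYS_NICE": 23,
    "CAP_AUDIT_WRITE": 29,
}


def bounding_set_mask(names):
    """Return the bitmask with one bit set per named capability."""
    mask = 0
    for name in names:
        mask |= 1 << CAPABILITY_BITS[name]
    return mask


def read_capbnd(status_text):
    """Extract the bounding set bitmask from /proc/<pid>/status content."""
    for line in status_text.splitlines():
        if line.startswith("CapBnd:"):
            return int(line.split()[1], 16)
    raise ValueError("no CapBnd line found")


expected = bounding_set_mask(CAPABILITY_BITS)
print(f"expected CapBnd: {expected:016x}")
```

Inside the running daemon, read_capbnd(open("/proc/self/status").read()) == expected would confirm that systemd applied the bounding set as configured.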

Security: SElinux

For even more improved security, I do define a policy in spammer-block_policy.te. All my systems are hardened and run SElinux in enforcing -mode. If something leaks, there is a limiting box in-place already. In 2014, I wrote a post about a security flaw with no impact on a SElinux-hardened system.

The policy will allow my daemon to:

- read and write FIFO-files
- create new unix-sockets
- use STDIN, STDOUT and STDERR streams
- read files in /etc/, note: read! not write
- read I18n (or internationalization) files on the system
- use capabilities
- use TCP and UDP -sockets
- access D-bus -sockets in /run/
- access D-bus watchdog UDP-socket
- access user passwd information on the system via SSSd
- read and search user's home directories as mail is stored in them, note: not write
- send email via SMTPd
- create, write, read and delete temporary files in /tmp/

The above list covers the access the daemon needs to monitor received emails and act on whatever is determined to be junk/spam. As the policy is very carefully crafted not to allow any destruction, writing, deletion or mangling outside /tmp/, I think such hardening makes the daemon very secure.

Yes, in /tmp/, there is stuff that can be altered with potential security implications. First you have to access the process. While hacking the daemon, make sure to keep the event-loop running or systemd will zap the process within next 20 seconds or less. I really did consider quite a few scenarios if, and only if, something/somebody pops the cork on my daemon.

RPM Packaging

To wrap all of this into nice packages, I'm using rpmenv. This toolkit will automatically wrap anything needed by the daemon into a nice virtualenv and deploy that to /usr/libexec/spammer-block/. See rpm.json for details.

SElinux-policy has its own spammer-block_policy_selinux.spec. Having these two in separate packages is mandatory as the mechanisms to build the boxes are completely different. Also, this is the typical approach in other pieces of software. Not everybody has strict requirements to harden their systems.

Where to place an entire virtualenv in a Linux? That one is a ball-buster. RPM Packaging Guide really doesn't say how to handle your Python-based system daemons. Remember? Up there ↑, I tried explaining how all of this is rather novel and there isn't much information in The Net regarding this. However, I found somebody asking What is the purpose of /usr/libexec? on Stackexchange and decided that libexec/ is fine for this purpose. I do install shell wrappers into /bin/ to make everybody's life easier. Having entire Python environment there wouldn't be sensible.

Final words

Only time will tell if I made the right design choices. I totally see Python and Rust -based daemons gaining popularity in the future. The obvious difference is that Rust is a compiled language like Go, C and C++. Python isn't.

ChromeOS Flex test drive

Monday, October 10. 2022

Would you like to run an operating system which ships as-is, no changes allowed after installation? Can you imagine your mobile phone without apps of your own choosing? Your Windows10 PC? Your macOS Monterey? Most of us cannot.

As a computer enthusiast, of course I had to try such a feat!

Prerequisites

To get the ball rolling, check out the Chrome OS Flex installation guide @ Google. What you'll need is supported hardware. In the installation guide, there is a certified models list and it contains a LOT of supported PC and Mac models. My own victim/subject/target was a 12 year old Lenovo and even that is on the certified list! WARNING: The hard drive of the victim computer will be erased beyond data recovery.

The second thing you'll need is a USB-stick to boot your destination ChromeOS. Any capacity will do. I had a 32 GiB stick and it used 128 MiB of it. That's less than 1% of the capacity. So, any booting stick will do the trick for you. Also, you won't be needing the stick after install; the requirement is just to briefly slip an installer onto it, boot and be done.

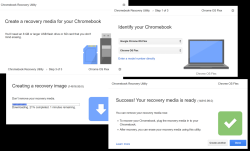

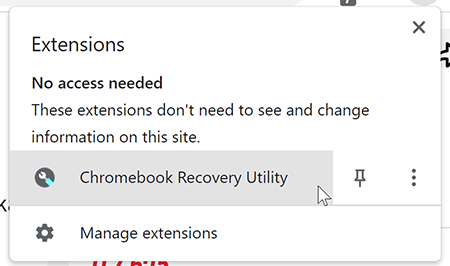

The third and final thing you'll need is the Google Chrome browser with the ChromeOS recovery media extension installed in it:

To clarify, let's repeat that:

Your initial installation into your USB-stick will be done from Google Chrome browser using a specific extension in it.

Yes. It sounds a bit unorthodox, or different from what other OSes do. Given Google's reach among web browser users, that seemed like the best idea. This extension will work in any OS.

To log into your ChromeOS, you will need a Google Account. Most people on this planet do have one, so most likely you're covered. On the other hand, if your religious beliefs are strongly anti-Google, the likelihood of you running an operating system made by Google is low. You, rare person, won't be able to log in, but everybody else will.

Creating installation media

That's it. As there won't be much data on the stick, the creation flies by fast!

Installing ChromeOS Flex

If media creation was fast, this will be even faster.

Just boot the newly created stick and that's pretty much it. The installer won't store much information to the drive, so you will be done in a jiffy.

Running ChromeOS Flex

Log into the machine with your Google Account. Remember: This OS won't work without a network connection. You really really will need an active network connection to use this one.

All you have is a set of Google's own apps: Chrome, Gmail, YouTube and such. By looking at the list in Find apps for your Chromebook, you'd initially think all is good and you can have your favorite apps in it. To make your (mis)belief stronger, even Google Play is there for you to run and search apps. Harsh reality sets in quite fast: you cannot install anything via Google Play. All the apps in Google Play are for Android or real ChromeOS, not for Flex. Reason is obvious: your platform is running an AMD-64 CPU and all the apps are for ARM. This may change in the future, but at the time of writing it is what it is.

You lose a lot, but there is something good in this trade-off. As you literally can not install anything, not even malware can be installed. ChromeOS Flex has to be the safest OS ever made! Most systems in the world are manufactured from ground up to be generic and be able to run anything. This puppy isn't.

SSH

After initial investigation, without apps, without password manager, without anything, I was about to throw the laptop back to its original dust gathering duty. What good is a PC which runs a Chrome browser and nothing else? Then I found the terminal. It won't let you actually enter the shell of your ChromeOS laptop. It will let you SSH to somewhere else.

On my own boxes, I'll always deactivate plaintext passwords, so I bumped into a problem. From where do I get the private key for SSH-session? Obvious answer is either via Google Drive (<shivers>) or via USB-stick. You can import a key to the laptop and not worry about that anymore.

Word of caution: Think very carefully if you want to store your private keys in a system managed for you by Google.

Biggest drawbacks

For this system to be actually usable, I'd need:

- Proper Wi-Fi. This 12 year old laptop had only Wi-Fi 4 (802.11n)
  - This I managed to solve by using an Asus USB-AC51 -dongle to get Wi-Fi 5.
  - lsusb: ID 0b05:17d1 ASUSTek Computer, Inc. AC51 802.11a/b/g/n/ac Wireless Adapter [Mediatek MT7610U]
  - This won't solve my home network's need for Wi-Fi 6, but gets me to The Net.
  - There is no list of supported USB-devices. I have bunch of 802.11ac USB-sticks and this is the only one to work in ChromeOS Flex.
- My password manager and passwords in it
  - No apps means: no apps, not even password manager
  - What good is a browser when you cannot log into anything. All my passwords are random and ridiculously complex. They were not designed to be remembered nor typed.
  - In The world's BEST password advice, Mr. Horowitz said: "The most secure Operating System most people have access to is a Chromebook running in Guest Mode."

Nuisances

Installer won't let you change keyboard layout. If you have US keyboard, fine. If you don't, it sucks for you.

Partitions

As this is a PC, the partition table has an EFI boot partition. It runs EXT-4 and EXT-2 partitions and contains an encrypted home drive. It's basically a hybrid between an Android phone and a Linux laptop.

My 240 GiB SSD installed as follows:

Partition Table: gpt

Disk Flags:

| Number | Start | End | Size | File system | Name | Flags |
|---|---|---|---|---|---|---|
| 11 | 32.8kB | 33.3kB | 512B | | RWFW | |
| 6 | 33.3kB | 33.8kB | 512B | | KERN-C | chromeos_kernel |
| 7 | 33.8kB | 34.3kB | 512B | | ROOT-C | |
| 9 | 34.3kB | 34.8kB | 512B | | reserved | |
| 10 | 34.8kB | 35.3kB | 512B | | reserved | |
| 2 | 35.3kB | 16.8MB | 16.8MB | | KERN-A | chromeos_kernel |
| 4 | 16.8MB | 33.6MB | 16.8MB | | KERN-B | chromeos_kernel |
| 8 | 35.7MB | 52.4MB | 16.8MB | ext4 | OEM | |
| 12 | 52.4MB | 120MB | 67.1MB | fat16 | EFI-SYSTEM | boot, legacy_boot, esp |
| 5 | 120MB | 4415MB | 4295MB | ext2 | ROOT-B | |
| 3 | 4415MB | 8709MB | 4295MB | ext2 | ROOT-A | |
| 1 | 8709MB | 240GB | 231GB | ext4 | STATE | |

Finally

This is either for explorers who want to try stuff out or alternatively for people whose needs are extremely limited. If all you do is surf the web or YouTube then this might be for you. Anything special --> forget about it.

The best part with this is the price. I had the old laptop already, so cost was $0.

MacBook Pro - Fedora 36 sleep wake - part 2

Friday, September 30. 2022

This topic won't go away. It just keeps bugging me. Back in 2019 I wrote about GPE06 and a couple of months ago I wrote about sleep wake. As there is no real solution in existence and I've been using my Mac with Linux, I've come to the conclusion that they are in fact the same problem.

When I boot my Mac, log into Linux and observe what's going on, the following CPU-hog can be seen in top:

RES SHR S %CPU %MEM TIME+ COMMAND

0 0 I 41.5 0.0 2:01.50 [kworker/0:1-kacpi_notify]

ACPI-notify will chomp quite a lot of CPU. As previously stated, all of this will go to zero if /sys/firmware/acpi/interrupts/gpe06 is disabled. Note how GPE06 and ACPI are intertwined. They do have a cause and effect.

Also, applying the acpi=strict noapic kernel arguments as I suggested earlier:

grubby --args="acpi=strict noapic" --update-kernel=$(ls -t1 /boot/vmlinuz-*.x86_64 | head -1)

... will in fact reduce GPE06 interrupt storm quite a lot:

RES SHR S %CPU %MEM TIME+ COMMAND

0 0 I 10.0 0.0 0:22.92 [kworker/0:1-kacpi_notify]

The storm won't be removed, but it is drastically reduced. Also, the aluminium case of the MBP will be a lot cooler.

However, changes made by running grubby won't stick. Fedora User Docs, System Administrator’s Guide, Kernel, Module and Driver Configuration, Working with the GRUB 2 Boot Loader says the following:

To reflect the latest system boot options, the boot menu is rebuilt automatically when the kernel is updated or a new kernel is added.

Translation: When you install a new kernel, whatever changes you made with grubby won't stick to the new one. To make things really stick, edit the file /etc/default/grub and have the line GRUB_CMDLINE_LINUX contain the same ACPI changes as before: acpi=strict noapic
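Editing that line by hand is easy enough, but the change can also be sketched as a small text transformation. This is an illustration only, assuming GRUB_CMDLINE_LINUX is a single double-quoted line whose arguments contain no spaces; the function name is made up, and the sketch works on a string rather than touching /etc/default/grub itself.

```python
def add_kernel_args(grub_default, new_args):
    """Return the file content with new_args appended to GRUB_CMDLINE_LINUX.

    Arguments already present are not added twice, so the function can be
    applied repeatedly without piling up duplicates.
    """
    lines = []
    for line in grub_default.splitlines():
        if line.startswith("GRUB_CMDLINE_LINUX="):
            value = line.split("=", 1)[1].strip().strip('"')
            args = value.split()
            for arg in new_args:
                if arg not in args:
                    args.append(arg)
            line = 'GRUB_CMDLINE_LINUX="' + " ".join(args) + '"'
        lines.append(line)
    return "\n".join(lines) + "\n"


# Hypothetical file content; a real /etc/default/grub will have more lines.
before = 'GRUB_TIMEOUT=5\nGRUB_CMDLINE_LINUX="rhgb quiet"\n'
print(add_kernel_args(before, ["acpi=strict", "noapic"]))
```

Keeping the transformation separate from the file I/O makes it easy to inspect the result before writing anything back as root.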

Many people are suffering from this same issue. Example: Bug 98501 - [i915][HSW] ACPI GPE06 storm

Even this change won't fix the problem. Lot of CPU-resources are still wasted. When you close the lid for the first time and open it again, this GPE06-storm miraculously disappears. Also what will happen, your next lid open wake will take couple of minutes. It seems the entire Mac is stuck, but it necessarily isn't (sometimes it really is). It just takes a while for the hardware to wake up. Without noapic, it never will. Also, reading the Freedesktop bug-report, there is a hint of problem source being Intel i915 GPU.

Hopefully somebody will direct some development resources to this. Linux runs well on Mac hardware, apart from these sleep/wake issues.

MacBook Pro - Fedora 36 sleep wake

Thursday, August 25. 2022

A few years back I wrote about running Linux on a MacBook Pro. A year ago, openSUSE failed to boot on the Mac. After a little bit of debugging, I realized the problem wasn't in the hardware. That particular kernel update simply didn't work on that particular hardware anymore. Totally fair, who would be stupid enough to attempt using an 8-year-old laptop? Well, I do.

There aren't that many distros I use and I had always wanted to see Fedora Workstation. It booted from USB and, unlike openSUSE Leap, it also booted once installed. So, ever since I've been running Fedora Workstation on an encrypted root drive.

One glitch, though. It didn't always wake from sleep. Quite easily, I found stories of an MBP not sleeping. Here's one: Macbook Pro doesn't suspend properly. Unlike that 2015 model, this 2013 puppy slept ok, but fell into such a deep state that it had major trouble regaining consciousness. Pressing the power button for 10 seconds obviously cycled the power, but it always felt like too much of a cannon for such a simple task.

Checking what ACPI has at /proc/acpi/wakeup:

Device S-state Status Sysfs node

P0P2 S3 *enabled pci:0000:00:01.0

PEG1 S3 *disabled

EC S4 *disabled platform:PNP0C09:00

GMUX S3 *disabled pnp:00:03

HDEF S3 *disabled pci:0000:00:1b.0

RP03 S3 *enabled pci:0000:00:1c.2

ARPT S4 *disabled pci:0000:02:00.0

RP04 S3 *enabled pci:0000:00:1c.3

RP05 S3 *enabled pci:0000:00:1c.4

XHC1 S3 *enabled pci:0000:00:14.0

ADP1 S4 *disabled platform:ACPI0003:00

LID0 S4 *enabled platform:PNP0C0D:00

For those hard-to-sleep cases, disabling XHC1 and LID0 did help, but made wakeup troublesome. While troubleshooting my issue, I did try whether disabling XHC1 and/or LID0 would make a difference. It didn't.
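For reference, the enabled wakeup sources can be picked out of that table with a one-liner, and a source is toggled by writing its name back to the same file. This is a minimal sketch; the helper name is made up and it assumes the four-column layout shown above.

```shell
# Sketch only: enabled_wakeup_sources is a hypothetical helper.
# Reads a table in /proc/acpi/wakeup format on stdin and prints the
# names of the devices whose status column says "*enabled".
enabled_wakeup_sources() {
    awk 'NR > 1 && $3 == "*enabled" { print $1 }'
}

# Real use (not executed here):
#   enabled_wakeup_sources < /proc/acpi/wakeup
#   echo XHC1 > /proc/acpi/wakeup   # as root; writing a name toggles it
```

Note that the write is a toggle, not a set: running it twice puts the device back where it started, and the setting does not survive a reboot.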

Also, I found it very difficult to find any detailed information on what those registered ACPI wakeup sources translate into. The lid is kinda obvious, but the rest remain relatively unknown.

While reading System Sleep States from Intel, a thought occurred to me. Let's make this one hibernate to see if that would work. Sleep semi-worked, but I wanted to see if hibernate was equally unreliable.

Going for systemctl hibernate didn't quite go as well as I expected. It simply resulted in an error of: Failed to hibernate system via logind: Not enough swap space for hibernation

With free, the point was made obvious:

total used free shared buff/cache available

Mem: 8038896 1632760 2424492 1149792 3981644 4994500

Swap: 8038396 0 8038396

For those not aware: modern Fedora installs don't have a swap partition on disk anymore. They have swap on zram instead, which lives in RAM and thus cannot hold a hibernation image. If you're really interested, go study zram: Compressed RAM-based block devices.

To verify the previous, running zramctl displayed pretty much the above information in the form of:

NAME ALGORITHM DISKSIZE DATA COMPR TOTAL STREAMS MOUNTPOINT

/dev/zram0 lzo-rle 7.7G 4K 80B 12K 8 [SWAP]

I finally gave up on that after bumping into the article Supporting hibernation in Workstation ed., draft 3. It states the following:

The Fedora Workstation working group recognizes hibernation can be useful, but due to impediments it's currently not practical to support it.

Ok ok ok. Got the point, no hibernate.

Looking into the sleep wake issue more, I bumped into this thread: Ubuntu Processor Power State Management. There, a merited user Toz suggested the following:

It may be a bit of a stretch, but can you give the following kernel parameters a try:

acpi=strict noapic

I had attempted lots of options, and that one didn't sound that radical. I found the active kernel file /boot/vmlinuz-5.18.18-200.fc36.x86_64, then added the mentioned kernel arguments to GRUB2 with: grubby --args=acpi=strict --args=noapic --update-kernel=vmlinuz-5.18.18-200.fc36.x86_64

... aaand a reboot!

To my surprise, it improved the situation. Closing the lid and opening it now works robustly. However, that does not solve the problem where the battery is nearly running out and I plug in the MagSafe. Any power input to the system taints sleep and it's back to deep freeze. I'm happy about the improvement, though.

This is definitely a long shot, but still: If you have an idea how to fix Linux sleep wake on ancient Apple hardware, drop me a comment. I'll be sure to test it out.

ArchLinux - Pacman - GnuPG - Signature trust fail

Wednesday, July 27. 2022

In ArchLinux, this is what happens too often when you're running simple upgrade with pacman -Syu:

error: libcap: signature from "-an-author-" is marginal trust

:: File /var/cache/pacman/pkg/-a-package-.pkg.tar.zst is corrupted (invalid or corrupted package (PGP signature)).

Do you want to delete it? [Y/n] y

error: failed to commit transaction (invalid or corrupted package)

Errors occurred, no packages were upgraded.

This error has occurred multiple times over the years and, by googling, it has a simple solution. Looks like that solution went sour at some point. Deleting obscure directories and running pacman-key --init and pacman-key --populate archlinux won't do the trick. I tried that fix multiple times. The exact same error will be emitted.

The correct way of fixing the problem is running the following sequence (as root):

paccache -ruk0

pacman -Syy archlinux-keyring

pacman-key --populate archlinux

Now you're good to go for pacman -Syu and enjoy upgraded packages.
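What the three steps do: paccache -ruk0 removes cached packages that are not installed, pacman -Syy archlinux-keyring force-refreshes the package databases and installs the current keyring, and pacman-key --populate archlinux reloads the keys from it. As a minimal sketch, the sequence can be wrapped so it stops at the first failure. The wrapper name and the DRY_RUN switch are made up for this example.

```shell
# Sketch only: fix_pacman_keyring is a hypothetical wrapper.
# Runs the three steps in order and stops at the first failure.
# With DRY_RUN=1 it only prints the commands.
fix_pacman_keyring() {
    for cmd in \
        "paccache -ruk0" \
        "pacman -Syy archlinux-keyring" \
        "pacman-key --populate archlinux"
    do
        if [ "${DRY_RUN:-0}" = "1" ]; then
            echo "$cmd"
        else
            $cmd || return 1   # unquoted on purpose: split into words
        fi
    done
}

# Real use, as root (not executed here):
#   fix_pacman_keyring && pacman -Syu
```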

Disclaimer:

I'll give you really good odds that the above solution will eventually rot. It does work at the time of writing with archlinux-keyring 20220713-2.

Post-passwords life: Biometrics for your PC

Monday, July 4. 2022

Last year I did a few posts about passwords, example. The topic is getting worn out as we have established that passwords are a poor means of authentication, how easily passwords leak from unsuspecting users to bad people and how you really should be using super-complex passwords which are stored in a vault. Personally I don't think there are many interesting password avenues left to explore.

This year my sights are set on life after passwords: how are we going to authenticate ourselves and what do we need to do to get there.

Biometrics. A "password" every one of us carries everywhere and has readily available. Do the implementation wrong, leak that "password" and that human will be in big trouble. A biometric "password" isn't so easy to change. Impossible even (in James Bond movies, maybe). Given all the potential downsides, biometrics still beats the traditional password on one crucial point: physical distance. To authenticate with biometrics you absolutely, positively need to be near the device you're about to use. A malicious cracker on the other side of the world won't be able to brute-force their way through authentication unless they have your precious device in their hands. Even attempting any hacks remotely is impossible.

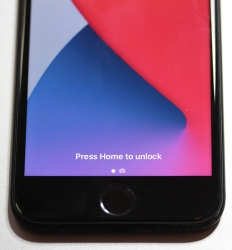

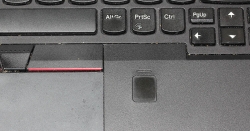

While eyeballing some of the devices and computers I have at hand:

The pics are from an iPhone 7, a MacBook Pro and a Lenovo T570. Hardware that I use regularly, but enter a password into rarely. There obviously exist other types of biometrics and password replacements, but I think you'll catch the general idea of life after passwords.

Then, looking at the keyboard of my gaming PC:

Something I use on a daily basis, but it really puzzles me why the Logitech G-513 doesn't have a fingerprint reader like most reasonable computer appliances do. Or, generally speaking, if not on the keyboard, couldn't my self-assembled PC have a biometric reader like most devices do? Why must I suffer from the lack of a simple, fast and reliable method of authentication? Uh??

Back in the day, fingerprint readers were expensive, bulky devices that weren't that accurate, and OS support was mostly missing and had to be injected by modifying operating system files. Improvement in this area is something I'd credit Apple for. They made biometric authentication commonly available to their users; when it became popular and sensor prices dropped, others followed suit.

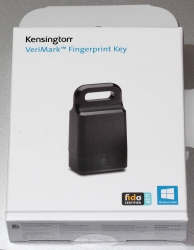

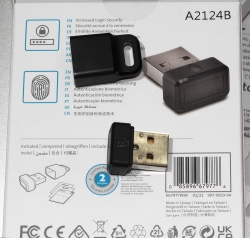

So, I went looking for a suitable product. This is the one I ended up with:

A note: I do love their "brief" product naming!

It is a Kensington® VeriMark™ Fingerprint Key supporting Windows Hello™ and FIDO U2F for universal 2nd-factor authentication. Pricing for one is reasonable, I paid 50€ for it. As I do own other types of USB/Bluetooth security devices, what they're asking for one is on par with market. I personally wouldn't want a security device which would be "cheapest on the market". I'd definitely go for a higher price range. My thinking is, this would be the appropriate price range for these devices.

Second note: Yes, I ended up buying a security device from a company whose principal market is mechanical locks.

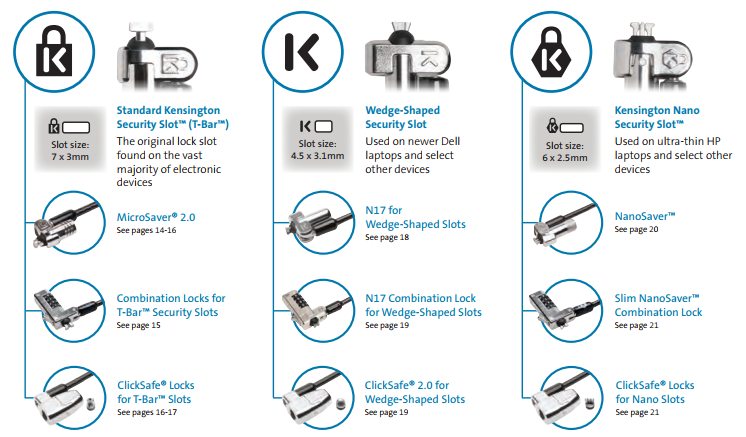

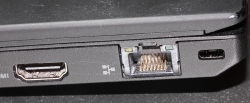

Here is one of those lock slots on the corner of my T570:

From left to right, there is an HDMI port, Ethernet RJ-45 and a Kensington lock slot. You could bolt the laptop to a suitable physical object, making theft of the device really hard. Disclaimer: Any security measure can be defeated, given enough time.

Back to the product. Here is what's in the box:

That would be a very tiny USB-device. Similar sized items would be your Logitech mouse receiver or smallest WiFi dongles.

Running lsusb on Linux, the following information can be retrieved:

Bus 001 Device 006: ID 06cb:0088 Synaptics, Inc.

Doing the verbose version lsusb -s 1:6 -vv, tons more is made available:

Bus 001 Device 006: ID 06cb:0088 Synaptics, Inc.

Device Descriptor:

bLength 18

bDescriptorType 1

bcdUSB 2.00

bDeviceClass 255 Vendor Specific Class

bDeviceSubClass 16

bDeviceProtocol 255

bMaxPacketSize0 8

idVendor 0x06cb Synaptics, Inc.

idProduct 0x0088

bcdDevice 1.54

iManufacturer 0

iProduct 0

iSerial 1 -redacted-

bNumConfigurations 1

Configuration Descriptor:

bLength 9

bDescriptorType 2

wTotalLength 0x0035

bNumInterfaces 1

bConfigurationValue 1

iConfiguration 0

bmAttributes 0xa0

(Bus Powered)

Remote Wakeup

MaxPower 100mA

Interface Descriptor:

bLength 9

bDescriptorType 4

bInterfaceNumber 0

bAlternateSetting 0

bNumEndpoints 5

bInterfaceClass 255 Vendor Specific Class

bInterfaceSubClass 0

bInterfaceProtocol 0

iInterface 0

Endpoint Descriptor:

bLength 7

bDescriptorType 5

bEndpointAddress 0x01 EP 1 OUT

bmAttributes 2

Transfer Type Bulk

Synch Type None

Usage Type Data

wMaxPacketSize 0x0040 1x 64 bytes

bInterval 0

Endpoint Descriptor:

bLength 7

bDescriptorType 5

bEndpointAddress 0x81 EP 1 IN

bmAttributes 2

Transfer Type Bulk

Synch Type None

Usage Type Data

wMaxPacketSize 0x0040 1x 64 bytes

bInterval 0

Endpoint Descriptor:

bLength 7

bDescriptorType 5

bEndpointAddress 0x82 EP 2 IN

bmAttributes 2

Transfer Type Bulk

Synch Type None

Usage Type Data

wMaxPacketSize 0x0040 1x 64 bytes

bInterval 0

Endpoint Descriptor:

bLength 7

bDescriptorType 5

bEndpointAddress 0x83 EP 3 IN

bmAttributes 3

Transfer Type Interrupt

Synch Type None

Usage Type Data

wMaxPacketSize 0x0008 1x 8 bytes

bInterval 4

Endpoint Descriptor:

bLength 7

bDescriptorType 5

bEndpointAddress 0x84 EP 4 IN

bmAttributes 3

Transfer Type Interrupt

Synch Type None

Usage Type Data

wMaxPacketSize 0x0010 1x 16 bytes

bInterval 10

Device Status: 0x0000

(Bus Powered)

So, this "Kensington" device is ultimately something Synaptics made. Synaptics have a solid track-record with biometrics and haptic input, so I should be safe with the product of my choice here.

For non-Windows users, the critical thing worth mentioning here is: There is no Linux support. There is no macOS support. This is only for Windows. Apparently you can go back to Windows 7 even, but sticking with 10 or 11 should be fine. Being Windows-only naturally implies the following: Windows Hello is mandatory (I think you should get the hint from the product name already).

Without biometrics, I kinda get the idea of Windows Hello. You can define a 123456-style PIN to log into your device, something very simple for anybody to remember. It's about physical proximity: you need to enter the PIN into the device, it won't work over the network. So, that's kinda ok(ish), but with biometrics Windows Hello kicks into high gear. What I typically do is define a rather complex alphanumeric PIN for my Windows and never use it again. Once you go biometrics, you won't be needing the password. Simple!

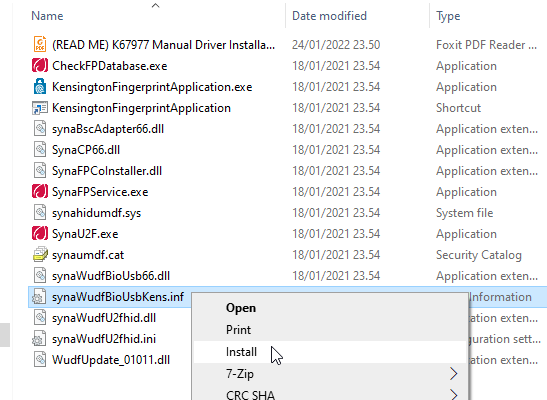

Back to the product. As these Kensington-people aren't really software-people, for installation they'll go with the absolutely bare minimum. There is no setup.exe or something any half-good Windows developer would be able to whip up. A setup which would execute pnputil -i -a synaWudfBioUsbKens.inf with free-of-charge tools like WiX would be rather trivial to do. But noooo. Nothing that fancy! They'll just provide a Zip-file of Synaptics drivers and instruct you to right click on the .inf-file:

To Windows users not accustomed to installing device drivers like that, it is a fast no-questions-asked -style process resulting in a popup:

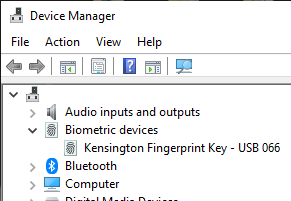

When taking a peek into Device Manager:

My gaming PC has a biometric device in it! Whoo!

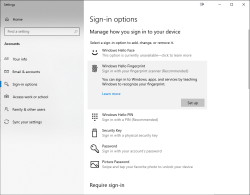

Obviously this isn't enough. Half of the job is done now. The other half is to train some of my fingers to the reader. Again, this isn't Apple, so user experience (aka. UX) is poor. There doesn't seem to be a way to list trained fingers or remove/update them. I don't really understand the reasoning for this sucky approach by Microsoft. To move forward with this, go to Windows Settings and enable Windows Hello:

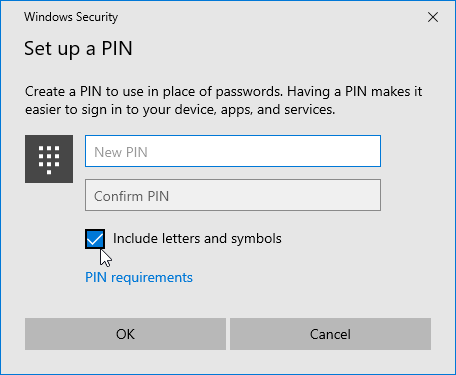

During the setup-flow of Windows Hello, you'll land at the crucial PIN-question:

Remember to include letters and symbols. You don't have to stick with just numbers! Of course, if that suits your needs, you can.

After that you're set! Just go hit ⊞ Win+L to lock your computer. Test how easy it is to log back in. Now, when looking at my G-513, it has the required feature my iPhone 7, MBP and Lenovo have:

Nicely done!

Fedora 35: Name resolver fail - Solved!

Monday, April 25. 2022

One of my boxes started failing after working pretty ok for years. On dnf update it simply said:

Curl error (6): Couldn't resolve host name for https://mirrors.fedoraproject.org/metalink?repo=fedora-35&arch=x86_64 [Could not resolve host: mirrors.fedoraproject.org]

Whoa! Where did that come from?

This is one of my test / devel / not-so-important boxes, so it might have been broken for a while. I simply hadn't noticed it happening.

Troubleshooting

Step 1: Making sure DNS works

Test: dig mirrors.fedoraproject.org

Result:

;; ANSWER SECTION:

mirrors.fedoraproject.org. 106 IN CNAME wildcard.fedoraproject.org.

wildcard.fedoraproject.org. 44 IN A 185.141.165.254

wildcard.fedoraproject.org. 44 IN A 152.19.134.142

wildcard.fedoraproject.org. 44 IN A 18.192.40.85

wildcard.fedoraproject.org. 44 IN A 152.19.134.198

wildcard.fedoraproject.org. 44 IN A 38.145.60.21

wildcard.fedoraproject.org. 44 IN A 18.133.140.134

wildcard.fedoraproject.org. 44 IN A 209.132.190.2

wildcard.fedoraproject.org. 44 IN A 18.159.254.57

wildcard.fedoraproject.org. 44 IN A 38.145.60.20

wildcard.fedoraproject.org. 44 IN A 85.236.55.6

Conclusion: Network works (obviously, I just SSHd into the box) and capability of doing DNS-requests works ok.

Step 2: What's in /etc/resolv.conf?

Test: cat /etc/resolv.conf

Result:

options edns0 trust-ad

; generated by /usr/sbin/dhclient-script

nameserver 185.12.64.1

nameserver 185.12.64.2

Test 2: ls -l /etc/resolv.conf

Result:

lrwxrwxrwx. 1 root root 39 Apr 25 20:31 /etc/resolv.conf -> ../run/systemd/resolve/stub-resolv.conf

Conclusion: Well, well. dhclient isn't supposed to overwrite /etc/resolv.conf if systemd-resolved is used. But is it?
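In the listing above, the symlink still points at stub-resolv.conf, yet the content is what dhclient-script wrote. A stub file generated by systemd-resolved would normally name its local listener, 127.0.0.53, as the nameserver. This is a minimal sketch of checking for that; the helper name is made up.

```shell
# Sketch only: uses_resolved_stub is a hypothetical helper.
# Succeeds if the given resolv.conf-style file lists the systemd-resolved
# stub listener (127.0.0.53) as a nameserver.
uses_resolved_stub() {
    grep -Eq '^nameserver[[:space:]]+127\.0\.0\.53([[:space:]]|$)' "$1"
}

# Real use (not executed here):
#   uses_resolved_stub /etc/resolv.conf || echo "stub file was overwritten"
```

With the content shown in this step, the check fails, which matches the suspicion that dhclient wrote through the symlink.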

Step 3: Is /etc/nsswitch.conf ok?

The previous step gave a hint that something was not ok. Just to cover all the bases, I needed to verify the NS switch order.

Test: cat /etc/nsswitch.conf

Result:

# Generated by authselect on Wed Apr 29 05:44:18 2020

# Do not modify this file manually.

# If you want to make changes to nsswitch.conf please modify

# /etc/authselect/user-nsswitch.conf and run 'authselect apply-changes'.

d...

# In order of likelihood of use to accelerate lookup.

hosts: files myhostname resolve [!UNAVAIL=return] dns

Conclusion: No issues there, all ok.

Step 4: Is systemd-resolved running?

Test: systemctl status systemd-resolved

Result:

● systemd-resolved.service - Network Name Resolution

Loaded: loaded (/usr/lib/systemd/system/systemd-resolved.service; enabled; vendor pr>

Active: active (running) since Mon 2022-04-25 20:15:59 CEST; 7min ago

Docs: man:systemd-resolved.service(8)

man:org.freedesktop.resolve1(5)

https://www.freedesktop.org/wiki/Software/systemd/writing-network-configurat>

https://www.freedesktop.org/wiki/Software/systemd/writing-resolver-clients

Main PID: 6329 (systemd-resolve)

Status: "Processing requests..."

Tasks: 1 (limit: 2258)

Memory: 8.5M

CPU: 91ms

CGroup: /system.slice/systemd-resolved.service

└─6329 /usr/lib/systemd/systemd-resolved

Conclusion: No issues there, all ok.

Step 5: If DNS resolution is there, can systemd-resolved do any resolving?

Test: systemd-resolve --status

Result:

Global

Protocols: LLMNR=resolve -mDNS -DNSOverTLS DNSSEC=no/unsupported

resolv.conf mode: stub

Link 2 (eth0)

Current Scopes: LLMNR/IPv4 LLMNR/IPv6

Protocols: -DefaultRoute +LLMNR -mDNS -DNSOverTLS DNSSEC=no/unsupported

Test 2: resolvectl query mirrors.fedoraproject.org

Result:

mirrors.fedoraproject.org: resolve call failed: No appropriate name servers or networks for name found

Conclusion: Problem found! systemd-resolved and dhclient are disconnected.

Fix

Edit file /etc/systemd/resolved.conf, have it contain Hetzner recursive resolvers in a line:

DNS=185.12.64.1 185.12.64.2

Make changes effective: systemctl restart systemd-resolved

Test: resolvectl query mirrors.fedoraproject.org

As everything worked and the correct result was returned, verify systemd-resolved status: systemd-resolve --status

Result now:

Global

Protocols: LLMNR=resolve -mDNS -DNSOverTLS DNSSEC=no/unsupported

resolv.conf mode: stub

Current DNS Server: 185.12.64.1

DNS Servers: 185.12.64.1 185.12.64.2

Link 2 (eth0)

Current Scopes: LLMNR/IPv4 LLMNR/IPv6

Protocols: -DefaultRoute +LLMNR -mDNS -DNSOverTLS DNSSEC=no/unsupported

Success!
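Instead of editing /etc/systemd/resolved.conf itself, the same setting can live in a drop-in file under /etc/systemd/resolved.conf.d/, which keeps the packaged file untouched. This is a minimal sketch: the helper and the file name dns.conf are arbitrary choices for this example. The DNS= line needs to sit under a [Resolve] section.

```shell
# Sketch only: write_dns_dropin is a hypothetical helper.
# Usage: write_dns_dropin DIR SERVER...
# Writes DIR/dns.conf with a [Resolve] section listing the servers.
write_dns_dropin() {
    dir=$1
    shift
    mkdir -p "$dir" || return 1
    {
        echo "[Resolve]"
        echo "DNS=$*"
    } > "$dir/dns.conf"
}

# Real use, as root (not executed here):
#   write_dns_dropin /etc/systemd/resolved.conf.d 185.12.64.1 185.12.64.2
#   systemctl restart systemd-resolved
```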

Why did it break? No idea.

I just hate these modern Linuxes where every single thing gets more layers of garbage added between the actual system and admin. Ufff.

Databricks CentOS 8 stream containers

Monday, February 7. 2022

Last November I created CentOS 8 -based Databricks containers.

At the time of tinkering with them, I failed to realize my base was off. I simply used the CentOS 8.4 image available at Docker Hub. On later inspection that was a failure. Even for 8.4, the image was old and was going to be EOLd soon after. Now that 31st Dec 2021 had passed, I couldn't get any security patches into my system. To put it mildly: that's bad!

What I was supposed to be using, was the CentOS 8 stream image from quay.io. Initially my reaction was: "What's a quay.io? Why would I want to use that?"

Thanks Merriam-Webster for that, but it doesn't help.

On a closer look, it looks like all the Red Hat container stuff is not at docker.io, it's at quay.io.

Simple thing: update the base image, rebuild all Databricks images and done, right? Yup. Nope. The images built from stream didn't work anymore. Uff! They failed so badly that not even the Apache Spark driver was available. No querying driver logs for errors. A major fail, that!

Well. Seeing why the driver won't work should be easy, just SSH into the driver and take a peek, right? The operation is documented by Microsoft at SSH to the cluster driver node. Well, no! According to me and a couple of people asking questions like How to login SSH on Azure Databricks cluster, it is NOT possible to SSH into an Azure Databricks node.

Looking at Azure Databricks architecture overview gave no clues on how to see inside of a node. I started to think nobody had ever done it. Also, enabling diagnostic logging required the premium (high-priced) edition of Databricks, which wasn't available to me.

At this point I was in a full whatta-hell-is-going-on!? -mode.

Digging into the documentation, I found out it was possible to run Cluster node initialization scripts, and then I knew what to do next. As I knew it was possible to make requests to the Internet from a job running in a node, I could write an initialization script which during execution would dig me an SSH tunnel from the node being initialized into something I would fully control. Obviously I chose one of my own servers and from that SSH-tunneled back into the node's SSH server. Double SSH, yes, but then I was able to get an interactive session into the well-protected node. An interactive session is what all bad people would want into any of the machines they crack into. Tunneling credit to other people: quite a lot of my implementation details came from How does reverse SSH tunneling work?

To implement my plan, I crafted the following cluster initialization script:

#!/bin/bash
# Everything this script prints to stdout is appended to a log file in DBFS
LOG_FILE="/dbfs/cluster-logs/$DB_CLUSTER_ID-init-$(date +"%F-%H:%M").log"
exec >> "$LOG_FILE"

# Allow my own public key to log in as root on this node
echo "$(date +"%F %H:%M:%S") Setup SSH-tunnel"
mkdir -p /root/.ssh
cat > /root/.ssh/authorized_keys <<EOT
ecdsa-sha2-nistp521 AAAAE2V0bV+TrsFVcsA==
EOT

# Install the SSH server, generate host keys and start the daemon
echo "$(date +"%F %H:%M:%S") Install and setup SSH"
dnf install openssh-server openssh-clients -y
/usr/libexec/openssh/sshd-keygen ecdsa
/usr/libexec/openssh/sshd-keygen rsa
/usr/libexec/openssh/sshd-keygen ed25519
/sbin/sshd

# Private key used for the outgoing connection to my own box
echo "$(date +"%F %H:%M:%S") - Add p-key"
cat > /root/.ssh/nobody_id_ecdsa <<EOT
-----BEGIN OPENSSH PRIVATE KEY-----
b3BlbnNzaC1rZXktdjEAAAAABG5vbmUAAAAEbm9uZQAAAAAAAAABAAAA
1zaGEyLW5pc3RwNTIxAAAACG5pc3RwNTIxAAAAhQQA2I7t7xx9R02QO2
rsLeYmp3X6X5qyprAGiMWM7SQrA1oFr8jae+Cqx7Fvi3xPKL/SoW1+l6
Zzc2hkQHZtNC5ocWNvZGVzaG9wLmZpAQIDBA==
-----END OPENSSH PRIVATE KEY-----
EOT
chmod go= /root/.ssh/nobody_id_ecdsa
echo "$(date +"%F %H:%M:%S") - SSH dir content:"

# Reverse tunnel: port 22222 on my own box leads to port 22 of this node
echo "$(date +"%F %H:%M:%S") Open SSH-tunnel"
ssh -f -N -T \
    -R22222:localhost:22 \
    -i /root/.ssh/nobody_id_ecdsa \
    -o StrictHostKeyChecking=no \
    nobody@my.own.box.example.com -p 443

Note: Above ECDSA-keys have been heavily shortened making them invalid. Don't copy passwords or keys from public Internet, generate your own secrets. Always! And if you're wondering, the original keys have been removed.

Note 2: My init-script writes log into DBFS, see exec >> "$LOG_FILE" about that.
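With -R22222:localhost:22, the sshd on my own box starts listening on port 22222 and forwards anything connecting there to port 22 of the node. The other half is then a plain ssh to localhost on that box. This is a minimal sketch, assuming the private key matching the authorized_keys entry above is available there; the helper only prints the command and its name is made up.

```shell
# Sketch only: connect_back_cmd is a hypothetical helper.
# Prints the command to run on my own box once the node has opened
# the tunnel. $1 = forwarded port, $2 = user on the node.
connect_back_cmd() {
    echo "ssh -p ${1:-22222} ${2:-root}@localhost"
}

# Real use on my.own.box.example.com (not executed here):
#   $(connect_back_cmd)
```

The defaults mirror the init script: port 22222 from the -R option and root, because the public key was written to /root/.ssh/authorized_keys.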

My plan succeeded. I got in and did the snooping around. It then took a couple of minutes for the Azure/Databricks plumbing to realize the driver was dead, kill the node and retry the startup sequence. A couple of minutes was plenty of time to eyeball /databricks/spark/logs/ and /databricks/driver/logs/ and deduce what was going on and what was failing.

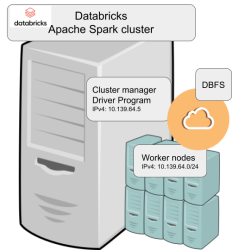

Looking at simplified Databricks (Apache Spark) architecture diagram:

Spark driver failed to start because it couldn't connect to the cluster manager. In turn, the cluster manager failed to start as the ps command wasn't available. It was there in good old CentOS, but it has been removed from the base stream image. As I made progress, the ip command turned out to be needed too. I added both and got the desired result: a working CentOS 8 stream Spark cluster.
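A check like this during the image build would have caught the problem early. This is a minimal sketch; the helper name is made up, and the package names in the comment are an assumption about where ps and ip come from on CentOS 8 stream (procps-ng and iproute, as far as I know).

```shell
# Sketch only: missing_commands is a hypothetical helper.
# Prints every given command name that cannot be found in PATH.
missing_commands() {
    for c in "$@"; do
        command -v "$c" >/dev/null 2>&1 || echo "$c"
    done
}

# Real use inside the container (not executed here):
#   missing_commands ps ip
# Assumed fix in the Dockerfile:
#   dnf install -y procps-ng iproute
```

An empty output means every command was found.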

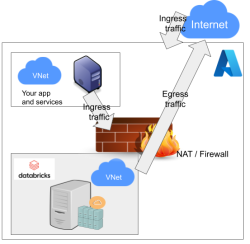

Notice how I'm specifying HTTPS-port (TCP/443) in the outgoing SSH-command (see: -p 443). In my attempts to get a session into the node, I deduced following:

As Databricks runs in its own sandbox, outgoing traffic is also heavily firewalled. Any attempts to access SSH (TCP/22) are blocked. Both HTTP and HTTPS are known to work as exit ports, so I spoofed my SSHd there.

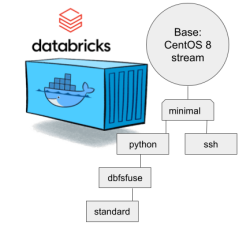

There are a number of different containers. To clarify which one to choose, I drew this diagram:

In my sparking, I'll need both Python and DBFS, so my choice is dbfsfuse. Most users would be happy with standard, but it only adds SSHd, which is known not to work. ssh has the exact same problem. The reason for them to exist is that in AWS SSHd does work. Among the changes from good old CentOS to stream is the lack of FUSE. The old one had FUSE even in minimal, but not anymore. You can access DBFS only with dbfsfuse or standard from now on.

If you want to take my CentOS 8 brick-containers for a spin, they are still here: https://hub.docker.com/repository/docker/kingjatu/databricks, now they are maintained and get security patches too!

pkexec security flaw (CVE-2021-4034)

Wednesday, January 26. 2022

This is something Qualys found: PwnKit: Local Privilege Escalation Vulnerability Discovered in polkit’s pkexec (CVE-2021-4034)

In your Linux there is sudo and su, but many won't realize you also have pkexec which does pretty much the same. More details about the vulnerability can be found at Vuldb.

I downloaded the proof-of-concept by BLASTY, but found it not working on any of my systems. I simply could not convert a nobody into a root.

Another one, https://github.com/arthepsy/CVE-2021-4034, states "verified on Debian 10 and CentOS 7."

Various errors I could get include a simple non-functionality:

[~] compile helper..

[~] maybe get shell now?

The value for environment variable XAUTHORITY contains suscipious content

This incident has been reported.

Or one which would stop to ask for root password:

[~] compile helper..

[~] maybe get shell now?

==== AUTHENTICATING FOR org.freedesktop.policykit.exec ====

Authentication is needed to run `GCONV_PATH=./lol' as the super user

Authenticating as: root

By hitting enter to the password-prompt following will happen:

Password:

polkit-agent-helper-1: pam_authenticate failed: Authentication failure

==== AUTHENTICATION FAILED ====

Error executing command as another user: Not authorized

This incident has been reported.

Or one which simply doesn't get the thing right:

[~] compile helper..

[~] maybe get shell now?

pkexec --version |

--help |

--disable-internal-agent |

[--user username] [PROGRAM] [ARGUMENTS...]

See the pkexec manual page for more details.

Report bugs to: http://lists.freedesktop.org/mailman/listinfo/polkit-devel

polkit home page: <http://www.freedesktop.org/wiki/Software/polkit>

Maybe there is a PoC which would actually compromise one of the systems I have to test with. Or maybe not. Still, I'm disappointed.

Databricks CentOS 8 containers

Wednesday, November 17. 2021

In my previous post, I mentioned writing code into Databricks.

If you want to take a peek into my work, the Dockerfiles are at https://github.com/HQJaTu/containers/tree/centos8. My hope, obviously, is for Databricks to approve my PR and those CentOS images would be part of the actual source code bundle.

If you want to run my stuff, they're publicly available at https://hub.docker.com/r/kingjatu/databricks/tags.

To take my Python-container for a spin, pull it with:

docker pull kingjatu/databricks:python

And run with:

docker run --entrypoint bash

Databricks containers really don't have an ENTRYPOINT in them. This atypical configuration is because the Databricks runtime takes care of setting everything up and running the commands in the container.

As always, any feedback is appreciated.